Published: April 13, 2026. The English Chronicle Desk.

The English Chronicle Online — Exploring the seismic intersection of technology and justice.

LONDON / NEW SCOTLAND YARD: In a significant move to address the “unprecedented” backlog of digital evidence, the Metropolitan Police has announced it is looking at using artificial intelligence to assist in child abuse cases. Modern investigations often involve enormous volumes of data, with millions of images and videos stored on seized devices, creating a bottleneck that can delay justice for years. By introducing AI, the Met aims to help its specialist units identify victims and perpetrators far more quickly while protecting officers from the mental health strain of manual viewing.

The initiative is seen as a landmark step in policing technology, though it has already sparked a frank debate over civil liberties and the potential for errors in algorithmic judgment.

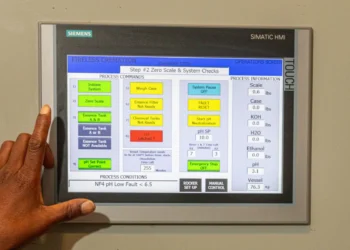

The technology behind the trial focuses on automated classification and the detection of known illegal material.

Rapid Triage: The AI acts as a filter, scanning terabytes of data to prioritize high-risk files so that the legal process can move significantly faster.

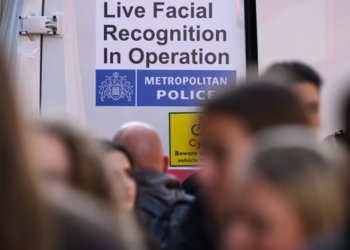

Victim Identification: Using facial recognition and object detection, the system can cross-reference clues across multiple cases to identify children in danger.

Protecting the Protectors: By reducing the time officers spend viewing distressing content, the AI also serves an officer-welfare function, lowering the risk of secondary trauma.

The social impact of using AI in such sensitive cases is a serious concern for privacy advocates and legal experts.

Algorithmic Bias: Critics warn that a flaw or bias in the AI’s training data could lead to serious errors in evidence processing, and say human oversight is needed at every stage.

The ‘Digital Footprint’ of Privacy: There is a frank discussion about the expansion of surveillance, with calls for updated legislation to ensure AI is used as an investigative aid rather than a mass-surveillance engine.

Justice for Victims: Supporters argue that the current backlog is a failure of the social contract, and that AI is the only tool capable of matching the scale of digital crime in 2026.

As observers await the results of the initial trial, the Met is positioning itself at the frontier of 21st-century law enforcement. This is not just a quick fix for an old problem; it is a fundamental change in how we protect the most vulnerable in society.

“We are using the Power Plant of AI to fight a ‘vile’ and ‘unprecedented’ evil,” a senior detective said. “This is a system update that saves lives by cutting through the ‘logistical friction’ of data. It is a significant and poignant tool for the modern age.”