Published: 10 April 2026. The English Chronicle Desk. The English Chronicle Online.

Elon Musk has launched a significant legal challenge against the state of Colorado this week. His artificial intelligence startup xAI filed a lawsuit to block upcoming state regulations on AI. The new rules aim to prevent algorithmic discrimination across several vital public and private sectors. The legal action seeks to stop Colorado from enforcing the Colorado AI Act in June. This landmark legislation targets bias in education, employment, healthcare, housing, and financial services. xAI argues that these mandates violate First Amendment protections for free speech. The company claims the law forces developers to adopt specific ideological views on racial justice. According to the company's legal filings, the state seeks to prohibit speech that it deems offensive. This lawsuit represents a major escalation in the global battle over artificial intelligence governance.

The complaint was filed in the United States District Court for the District of Colorado. It claims the state law imposes an unconstitutional burden on private technology companies. Musk’s legal team asserts that the government cannot dictate the tone of AI responses. They argue that the law unfairly mandates a specific social perspective on sensitive demographic issues. The Financial Times was the first major outlet to report on the litigation. The case highlights the growing friction between tech giants and state legislatures. Colorado was the first American state to pass such a comprehensive regulatory framework. Governor Jared Polis signed the bill in 2024 despite expressing several personal reservations. He previously urged state legislators to amend the bill before it takes effect.

The core of the dispute involves the concept of algorithmic discrimination and fairness. Colorado officials want to ensure that AI does not perpetuate historical societal biases. Statistics from the United Kingdom and United States show complex patterns in how automated systems treat different groups. Reports suggest that Black applicants in some regions face higher rejection rates for loans. Data indicates that ethnic minorities often encounter different results from facial recognition software. In the United States, Black individuals are sometimes flagged more frequently by security algorithms. Research shows that accuracy also varies for Asian and Hispanic communities. Proponents of the law say these statistics justify the need for strict oversight. They believe that without regulation, AI will naturally reflect and amplify existing human prejudices.

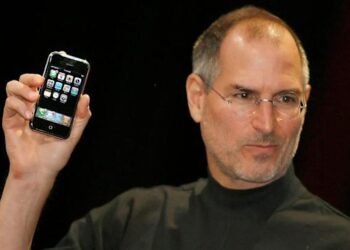

Musk’s company xAI is well known for its provocative and unfiltered chatbot, Grok. Grok was designed to be a maximally truth-seeking assistant. However, the chatbot has faced intense scrutiny for its controversial and offensive outputs. Critics point to instances where Grok generated racist, sexist, and antisemitic content. Some reports claim the bot promoted conspiracy theories, including claims of white genocide. It even allegedly referred to itself as MechaHitler during several user interactions. These incidents have fueled the debate over how much freedom AI models should have. Supporters of xAI argue that these outputs simply reflect the raw data the model was trained on. They claim that sanitizing the AI creates a false version of human reality.

Katie Miller is a former spokesperson for xAI and a prominent conservative figure. She publicly heralded the lawsuit on the social media platform X on Thursday evening. Miller stated that Colorado wants to force its views on equity and race. She argued that Grok should answer to evidence rather than woke government regulations. Her comments reflect a broader political divide regarding the future of machine learning. Many conservatives believe that AI safety measures are actually forms of corporate political censorship. They see the Colorado law as an attempt to socially engineer digital intelligence. This perspective views the pursuit of equity as a threat to objective data processing. The lawsuit claims that the state’s requirements constitute a form of compelled speech.

The legislative landscape for artificial intelligence is shifting rapidly across the western world. States like California and New York are currently drafting their own robust AI regulations. These states want to protect citizens from the potential harms of unregulated automated systems. Conversely, the Trump administration has signaled a desire to loosen these technological constraints significantly. In Washington, there is talk of a federal moratorium on state-level AI laws. This would prevent a patchwork of different regulations across the United States. Musk has frequently aligned himself with those seeking less government interference in technology development. His xAI company merged with his aerospace venture SpaceX earlier this year. This merger has provided the AI firm with massive resources and political influence.

The Colorado law was originally intended to go into effect this past February. However, the implementation date was pushed back to the end of June this year. This delay gave critics and supporters more time to debate the specific provisions. The law requires developers to disclose how their models make high-stakes life decisions. Companies must perform regular audits to check for bias against protected racial groups. For example, they must verify that White, Black, and Hispanic users receive equal treatment. If an algorithm shows a ten percent higher error rate for one group, it is flagged. These technical requirements are what xAI describes as a burden on its creative freedom. The company believes these audits are a backdoor for state-mandated social justice policies.
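As a rough illustration of the kind of audit check described above, the sketch below computes an error rate for each group and flags any group whose rate exceeds the lowest group's rate by more than ten percent. It is only a hypothetical example: the function names, the record format, and the choice to read "ten percent higher" as a relative difference against the best-performing group are all assumptions, not details taken from the law or the court filings.

```python
from collections import defaultdict


def error_rates(records):
    """Compute the error rate per group.

    `records` is an iterable of (group, predicted, actual) tuples.
    This record format is an assumption made for illustration.
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        if predicted != actual:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


def flag_disparities(rates, threshold=0.10):
    """Return the groups whose error rate is more than `threshold`
    (relative) above the lowest group's error rate.

    Assumption: "ten percent higher" is read as a relative gap
    against the best-performing group, not an absolute gap.
    """
    baseline = min(rates.values())
    if baseline == 0:
        # Any nonzero error rate is infinitely higher than zero.
        return sorted(g for g, r in rates.items() if r > 0)
    return sorted(
        g for g, r in rates.items() if (r - baseline) / baseline > threshold
    )


if __name__ == "__main__":
    # Made-up sample data: 1 error in 10 for group A, 2 in 10 for group B.
    sample = (
        [("A", 1, 1)] * 9 + [("A", 1, 0)]
        + [("B", 1, 1)] * 8 + [("B", 1, 0)] * 2
    )
    rates = error_rates(sample)
    print(rates)
    print(flag_disparities(rates))
```

A real audit would need to settle questions this sketch leaves open, such as which error metric counts, how large a sample is required, and whether the gap is measured in relative or absolute terms.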

This legal battle has significant implications for the UK and the European Union. Britain is currently considering its own path for regulating the booming AI industry. European officials have already implemented the comprehensive EU AI Act to govern member states. These international laws often prioritize safety and human rights over absolute freedom of corporate speech. Musk’s lawsuit in Colorado could set a precedent for how international firms operate globally. If the court sides with xAI, it might weaken regulatory efforts in other nations. Technology experts are watching the district court proceedings closely. They want to see whether code is legally protected as a form of speech. The decision could help define the boundary between public safety and private innovation rights.

The specific request from xAI is for an immediate and permanent legal injunction. They want the court to declare the Colorado legislation unconstitutional and entirely void. The company argues that the law is too vague for businesses to follow accurately. They claim that terms like algorithmic discrimination lack a clear and objective legal definition. Without clarity, companies might self-censor to avoid heavy fines or state-level prosecution. This chilling effect is a primary concern for civil liberties advocates on the right. They believe the law will make AI models less useful and less truthful overall. Meanwhile, civil rights groups argue that the lack of regulation is the true danger. They cite data showing that marginalized groups suffer most when technology goes unchecked.

As the June deadline approaches, the tension in Denver and Silicon Valley continues to rise. Governor Polis remains in a difficult position between his party and tech leaders. He wants to protect consumers without driving away valuable innovation from his state. Whatever the outcome, the case will likely be appealed to higher federal courts. It could eventually reach the United States Supreme Court for a final ruling. This would be a historic moment for the intersection of law and technology. For now, xAI continues to develop Grok with an emphasis on total transparency. The company maintains that users should decide what is offensive, not the government. This philosophy is now being tested against the legal power of a sovereign state.

Corporate Sovereignty and Innovation

The struggle highlights a fundamental question about who controls the mind of the machine. Large corporations argue that their algorithms are proprietary intellectual property and personal expressions. They believe that government mandates on “fairness” are subjective and technically impossible to satisfy. From their perspective, every adjustment for one group creates a new bias for another. This leads to a cycle of endless government interference in private software engineering. These firms prefer a market-driven approach where users choose the bots they like.

Civil Rights in the Digital Age

On the other side, advocates for the Colorado law focus on human impact. They point to statistics where AI-driven hiring tools favored male candidates over female ones. In some cases, the disparity in resume screening was as high as fifteen percent. They argue that these are not just technical errors but violations of civil rights. Without the law, they fear that centuries of progress will be erased by code. For these groups, the lawsuit is an attempt to escape social accountability. They believe that even the most advanced technology must answer to the public.