Published: 10 December 2025. The English Chronicle Desk. The English Chronicle Online.

UK police forces have been criticised after lobbying to use facial recognition technology widely reported as biased against women, young people, and ethnic minorities. The technology, deployed through the Police National Database (PND), allows retrospective searches comparing a suspect’s image against more than 19 million custody photographs. Officials have now confirmed that the system disproportionately misidentifies Black and Asian individuals as well as women, raising serious ethical and operational concerns.

The Home Office acknowledged last week that the technology contains bias following a review conducted by the National Physical Laboratory (NPL). That study revealed the algorithm misidentifies women, Black, and Asian people at significantly higher rates than white men, prompting concerns over fairness and accountability. Documents obtained by the Guardian and Liberty Investigates indicate police leadership has known of the bias for over a year yet requested changes to maintain operational effectiveness.

In September 2024, police chiefs were formally notified of the system’s limitations. The NPL’s Home Office–commissioned report recommended raising the confidence threshold for potential matches, a measure shown to reduce biased outcomes substantially. At the higher threshold, false positives decreased dramatically, lowering the risk of disproportionately implicating vulnerable demographic groups.

However, a decision by the National Police Chiefs’ Council (NPCC) to raise the threshold was reversed within a month after multiple police forces complained that the system generated fewer investigative leads. Records indicate that the higher threshold cut the rate of potential matches from 56% to just 14%, illustrating the tension between operational convenience and fairness. The Home Office and NPCC have declined to disclose current threshold settings, though NPL findings suggest the system could still produce false positives for Black women almost 100 times more frequently than for white women under certain configurations.
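For readers unfamiliar with how a confidence threshold shapes search results, the trade-off can be illustrated with a minimal Python sketch. Everything in it is hypothetical: the `Candidate` record, the `summarise` helper, the scores, and the group labels "A" and "B" are invented for illustration and do not reflect the PND system, its algorithm, or the NPL's test data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """One candidate returned by a hypothetical face-matching search."""
    score: float         # similarity score between 0.0 and 1.0 (invented values)
    is_true_match: bool  # whether the candidate really is the person searched for
    group: str           # illustrative demographic label, not real data


def summarise(candidates, threshold):
    """Count returned candidates and false positives at a given threshold."""
    # Only candidates scoring at or above the threshold are shown to an officer.
    returned = [c for c in candidates if c.score >= threshold]
    # A false positive is a returned candidate who is not the right person.
    false_positives = [c for c in returned if not c.is_true_match]
    by_group = {}
    for c in false_positives:
        by_group[c.group] = by_group.get(c.group, 0) + 1
    return {
        "returned": len(returned),
        "false_positives": len(false_positives),
        "false_positives_by_group": by_group,
    }


# Entirely synthetic scores, chosen so that wrong matches cluster in group "B".
CANDIDATES = [
    Candidate(0.95, True, "A"),
    Candidate(0.91, True, "B"),
    Candidate(0.72, True, "A"),
    Candidate(0.78, False, "B"),
    Candidate(0.74, False, "B"),
    Candidate(0.70, False, "B"),
    Candidate(0.66, False, "A"),
    Candidate(0.55, False, "B"),
]

low = summarise(CANDIDATES, threshold=0.6)
high = summarise(CANDIDATES, threshold=0.9)
print("threshold 0.6:", low)
print("threshold 0.9:", high)
```

In this toy data, the lower threshold returns seven candidates, four of them wrong and three of those from group "B". The higher threshold returns only two candidates and no false positives, but it also drops one genuine match that scored 0.72. That is the shape of the tension described above: fewer leads in exchange for fewer, and less unevenly distributed, misidentifications.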

Home Office statements acknowledge the risks but emphasise that human oversight remains integral to the process. “The testing identified that in a limited set of circumstances the algorithm is more likely to incorrectly include some demographic groups in its search results,” a spokesperson said, highlighting the need for trained officers to review outcomes before any action.

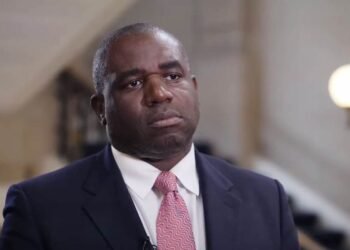

The controversy arises as the government launches a ten-week consultation on expanding facial recognition usage, with Policing Minister Sarah Jones calling the technology the “biggest breakthrough since DNA matching.” Critics argue that expansion without addressing known biases could further entrench systemic disparities in policing.

Prof Pete Fussey, a former independent reviewer of the Metropolitan Police’s facial recognition use, warned that convenience should not override fundamental rights. “This raises the question of whether facial recognition only becomes useful if users accept biases in ethnicity and gender. Convenience is a weak argument for overriding fundamental rights, and one unlikely to withstand legal scrutiny,” he said.

Independent oversight voices also highlighted concerns regarding alignment with broader race action commitments. Abimbola Johnson, chair of the independent scrutiny board for the police race action plan, noted: “There was very little discussion through race action plan meetings of the facial recognition rollout despite obvious cross-over with the plan’s concerns. These revelations show anti-racism commitments are not being translated into wider practice.” She emphasised that any deployment must meet strict national standards, demonstrate reduced bias, and undergo independent scrutiny.

In response, the Home Office has indicated that a new, independently tested algorithm with no statistically significant bias has been procured and will be tested early next year. Officials stress that the technology aims to protect the public by supporting criminal investigations while ensuring human review is applied at every step.

The case has sparked renewed debate over the balance between public safety and civil liberties, raising pressing questions about ethical use of emerging technologies. Critics argue that prioritising operational efficiency over fairness risks undermining public trust and perpetuating systemic inequalities.

As the consultation progresses, stakeholders from civil society, academia, and policing will be closely watching how decisions are made to ensure the technology does not compound existing racial disparities. This episode serves as a reminder that emerging law enforcement technologies must be evaluated with rigorous ethical standards, independent oversight, and a commitment to fairness, transparency, and accountability.

The ongoing discussion highlights a critical moment for policing in the UK, as leaders confront the challenge of integrating technological advances while safeguarding individual rights. Missteps could exacerbate longstanding social inequalities, while careful implementation may prove pivotal in achieving justice, trust, and operational effectiveness.