Published: March 10, 2026

The English Chronicle Desk

The English Chronicle Online

Artificial intelligence-generated misinformation targeting British politics has been traced to overseas “content farms,” according to a recent investigation that uncovered coordinated social media activity designed to spread fabricated stories and manipulated images of UK political figures. Technology company Meta has already removed several Facebook pages linked to Vietnam after evidence emerged that they were distributing false narratives and AI-generated content about British politicians.

The discovery highlights growing concerns about how rapidly evolving artificial intelligence tools are being used to produce misleading content that appears credible to ordinary social media users. Experts warn that such activity could undermine public trust in political institutions and distort democratic debate, particularly as elections approach in parts of the United Kingdom.

Researchers began examining the issue after identifying several Facebook pages that presented themselves as UK-based news outlets. Although the pages appeared to operate within Britain, platform transparency features revealed that most of them were actually managed from Vietnam. These pages had accumulated thousands of followers and repeatedly posted highly similar stories about political events, many of which were either exaggerated or entirely fabricated.

Some posts combined sensational headlines with AI-generated images depicting well-known political figures in dramatic situations that never occurred. Investigators found examples portraying politicians abruptly walking out of television interviews after heated confrontations or appearing in compromising or humiliating circumstances. Although some content referenced genuine news events, much of it was demonstrably false.

Meta removed a number of these pages after being contacted by investigators. However, analysts noted that new pages appeared frequently, sometimes within days of others being taken down. This pattern suggests a coordinated effort to continuously recreate networks capable of spreading viral misinformation.

Professor Martin Innes, director of the Crime and Security Research Institute at Cardiff University, described these operations as “content farms” designed primarily to generate online engagement. According to Innes, the individuals behind such pages are often motivated by profit rather than ideology. By creating sensational or shocking posts likely to attract attention, page operators can potentially earn advertising revenue through platform monetisation programmes.

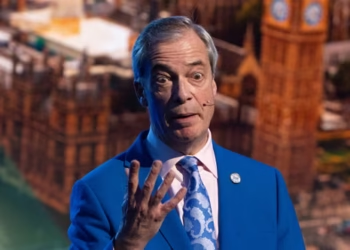

The investigation revealed that the misleading posts often featured prominent British political figures including Nigel Farage, Boris Johnson, Rishi Sunak and Prime Minister Keir Starmer. In several fabricated stories Farage was depicted adopting dogs, donating his personal fortune to charity or celebrating the birth of a child—none of which had any basis in fact. Other posts portrayed him being arrested, accompanied by artificial images showing him in handcuffs.

Starmer was also the subject of fabricated claims suggesting he had collapsed during a public appearance or was facing legal action over alleged election manipulation. Such posts were designed to resemble breaking news reports, making them more likely to be shared quickly across social media platforms.

In some cases Facebook displayed warning labels indicating that particular posts had been debunked by independent fact-checkers. One example involved a false story claiming Farage had been hospitalised, which had previously been disproven by the fact-checking organisation Full Fact. Yet researchers found nearly identical stories circulating elsewhere without warning labels attached, demonstrating the difficulty platforms face in tracking the spread of misinformation.

Investigators also observed unusual patterns in engagement statistics on the pages. Some posts attracted thousands of reactions, comments and shares, while others received almost none despite the pages having large numbers of followers. According to Professor Innes, this discrepancy may indicate the use of automated bot accounts designed to manipulate social media algorithms.

Bots can artificially inflate follower counts or generate interactions that cause platform algorithms to promote content more widely. By exploiting such systems, content farm operators can increase the visibility of their posts and potentially expose millions of users to misleading information.

While many social media users recognised the posts as fake or expressed scepticism in the comments, others appeared to believe the stories were genuine. Experts say this mixture of reactions illustrates how easily misinformation can circulate online, especially when it is presented in a format that mimics legitimate news reporting.

The issue is drawing renewed attention because elections to the Senedd in Wales and the Scottish Parliament are scheduled to take place on 7 May, alongside local elections in parts of England. Authorities fear that manipulated images or videos could influence political discussions during the campaign period.

Deepfakes—digitally altered videos, audio recordings or photographs that make fabricated events appear real—have become significantly easier to produce in recent years. Previously such manipulations required specialised software and advanced technical skills, but modern text-to-image and video generation tools allow even inexperienced users to create convincing fabrications within minutes.

Despite widespread concern, research from the Alan Turing Institute previously concluded that deepfakes did not have a measurable impact on the United Kingdom’s 2024 general election. However, analysts caution that technological barriers have continued to fall, potentially increasing the influence of AI-generated misinformation in future campaigns.

In response to these concerns, the Electoral Commission has begun developing technology capable of identifying and tracking deepfake content online. The initiative is being carried out in collaboration with the Home Office and aims to help authorities detect manipulated media circulating during election campaigns.

Vijay Rangarajan, chief executive of the Electoral Commission, said the project is intended to help voters recognise misinformation and strengthen public confidence in the democratic process. The software is expected to monitor suspicious digital content and assist regulators in determining whether campaign rules have been breached.

However, some experts warn that detection systems alone may not be sufficient to prevent the spread of manipulated media. Professor Innes noted that identifying sophisticated deepfakes can be extremely time-consuming, sometimes requiring specialists to examine images or video frames for many hours before reaching a conclusion.

BBC researchers also discovered several AI-generated videos targeting Welsh politicians. Some of the clips showed public figures appearing to endorse political opponents or engage in inappropriate behaviour. One widely shared video depicted Prime Minister Keir Starmer and Welsh First Minister Eluned Morgan kissing, while another appeared to show Plaid Cymru leader Rhun ap Iorwerth enthusiastically praising Reform UK.

The creators of one Facebook page responsible for such videos insisted that the material was intended as satire. They argued that viewers should recognise the clips as jokes and pointed out that captions sometimes indicated the content was not real. Nevertheless, critics argue that even satirical deepfakes can be misleading when they circulate without clear context.

Other manipulated videos were less obviously humorous. One clip shared by an anti-Reform page appeared to show Nigel Farage delivering a speech against having separate parliaments and sports teams within the United Kingdom. No record exists of him ever making such remarks, and visual distortions suggested the video had been generated using artificial intelligence tools.

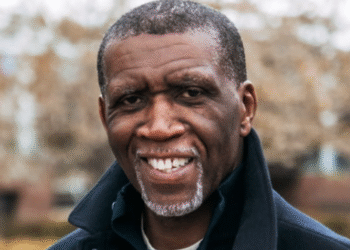

Politicians who have been targeted by deepfakes say the experience can be deeply disturbing. Labour MP Alex Davies-Jones revealed that she had been subjected to explicit manipulated images portraying her in degrading situations. She said speaking publicly about such attacks could be embarrassing but warned that ignoring them would allow the problem to grow unchecked.

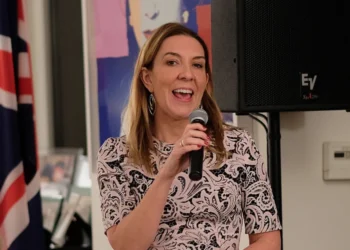

Other political figures expressed similar concerns. Conservative Member of the Senedd Janet Finch-Saunders said she felt physically ill when she discovered an explicit deepfake image of herself circulating online. She warned that less technologically savvy members of the public might struggle to recognise fabricated content.

Campaigners and political leaders across the ideological spectrum are now calling for stronger regulation of artificial intelligence technologies used to create deceptive media. Critics argue that without clear safeguards, the rapid spread of deepfakes could erode trust in democratic institutions and create confusion among voters.

Government officials say the risks associated with deepfake technology are widely recognised. The Department for Science, Innovation and Technology has stated that social media companies must take greater responsibility for removing fraudulent or illegal content from their platforms. Under the United Kingdom’s Online Safety Act, companies that fail to address such material could face regulatory enforcement measures.

Although the full impact of AI-generated misinformation remains uncertain, experts agree that the combination of powerful generative tools and global social media networks has created new challenges for democratic societies. As elections approach, authorities and technology companies face increasing pressure to ensure that voters can distinguish genuine information from sophisticated digital fabrications.