Published: 30 April 2026. The English Chronicle Desk. The English Chronicle Online.

The European Commission has issued a preliminary finding against the global tech giant Meta, saying the company failed to keep young children off its social platforms. Regulators claim that Facebook and Instagram did not do enough to block users under thirteen. The investigation into these safety measures has been ongoing for nearly two years. The commission’s preliminary view is that Meta breached the Digital Services Act, which requires platforms to mitigate risks faced by their youngest users. The commission argues that the company did not diligently identify underage users. It found that children could bypass age checks simply by entering a fake birthdate, and that no robust verification system was in place to confirm a user’s self-declared age. This lack of oversight has caused concern among officials within the European Union.

Meta has responded by expressing strong disagreement with the commission’s initial findings. A company spokesperson stated that its platforms are intended only for those aged thirteen or older, and insisted that active measures are already in place to detect and remove underage accounts. The tech firm says it continues to invest heavily in new technologies to solve this complex problem. However, the commission believes the current tools are difficult to use and lack proper follow-up. It suggests that reporting an underage user is a confusing process for the average platform visitor. As a result, many children under thirteen continue to use these apps. Henna Virkkunen, the commission’s lead official on tech policy, spoke plainly about these failures. She noted that terms and conditions must be more than just written statements. These rules should form the basis for concrete action to protect young users.

The potential financial consequences for the Silicon Valley company are high. If the findings are upheld, Meta could face a fine of up to six percent of its global annual turnover. Meta reported global revenue of over two hundred billion dollars last year, so the maximum penalty could exceed twelve billion dollars. The scale of that sum reflects the firm regulatory stance the European Union is now taking. The company will now have the chance to examine the files and defend itself. It plans to share more details about new safety measures rolling out next week. The spokesperson argued that age verification is an industry-wide challenge requiring a collective global solution. The company has pledged to continue engaging constructively with the commission throughout the process. Despite these promises, the pressure from European governments continues to grow.

This legal battle comes at a time when many nations are considering outright social media bans for minors. Governments across Europe are worried about the digital tsunami flooding into homes, and officials are deeply concerned about the negative impact of big tech on family life. Spain is pushing for a ban on social media for anyone under sixteen, a move that aims to protect children from what leaders call a lawless digital wild west. French lawmakers have already voted for similar restrictions for children under fifteen. The UK government is also looking at new functionality restrictions for those under sixteen. These movements reflect a broader shift in how society views the safety of the internet. Public health experts have long warned about the dangers of early exposure to social media. They believe that unregulated access can lead to serious mental health issues for young teenagers.

Regulators estimate that twelve percent of children under thirteen in the EU use these platforms. That figure represents millions of young people who may be exposed to online dangers, including cyberbullying, predatory grooming, and inappropriate or harmful content. The commission is also investigating whether Meta’s platforms are designed to have an addictive effect on users. It is looking closely at the rabbit hole effect created by powerful algorithms, which can feed young people extreme content that damages their mental health. When the inquiry began in 2024, Meta claimed it had developed fifty different safety tools and argued that it had spent a decade trying to make the internet safer. Yet the commission feels these tools have not been effective enough to protect young children. The gap between corporate claims and regulatory findings remains a major point of public debate.

To address these issues, the commission is promoting a new age verification mobile app. It wants all member states to have the tool in operation by late December. The app would allow users to prove their age without sharing private personal details. It could be used as a standalone service or integrated into existing national digital wallets. However, some member governments are hesitant about using a single Europe-wide verification application, preferring to develop their own national versions to keep better control of data. Cybersecurity experts have also raised alarms about potential vulnerabilities in such a system. One expert recently claimed to have bypassed the app’s security in less than two minutes. The commission responded that the vulnerability was found in an early demo version, and it insists that the final version of the app will be secure and reliable.

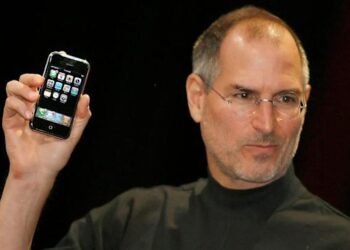

The outcome of this investigation will likely set a major precedent for the entire industry. Other tech giants like TikTok and YouTube are also being watched closely by European regulators. The Digital Services Act has given the EU much more power to hold companies accountable. This law ensures that big tech companies cannot simply ignore the safety of their users. If Meta fails to improve its systems, the legal and financial pressure will only increase. Parents and educators are also calling for more transparency from these large social media corporations. They want to know exactly how their children’s data is being used and protected. The warmth of social connection should not come at the cost of child safety. This case represents a turning point in the relationship between governments and the tech world. The world will be watching closely as Meta prepares its formal legal defense next month.

As the digital landscape evolves, the definition of online safety continues to change for everyone. What was acceptable a decade ago is no longer considered safe by modern legal standards. The European Commission is sending a clear message that child protection is a top priority. They believe that no company is too large to follow the laws of the land. This struggle highlights the ongoing tension between rapid innovation and the need for public safety. While social media offers many benefits, the risks to children cannot be overlooked any longer. The investigation into Meta will continue to explore the mental health impacts of their apps. This broader inquiry may reveal even more concerns about how algorithms target the youngest users. For now, the focus remains on the basic requirement of effective age gatekeeping measures. The next few weeks will be crucial for the future of Meta in Europe.

Ultimately, the goal is to create a digital environment where children can thrive without fear. This requires cooperation between tech companies, parents, and government regulators at every level. The preliminary findings against Meta serve as a stark reminder of the work that remains. Protecting the next generation from digital harm is a shared responsibility. The UK and the EU appear united in their desire to tighten these rules. As 30 April marks a new chapter, the tech industry faces its greatest challenge. Whether through fines or new laws, the era of self-regulation seems to be ending fast. The English Chronicle will continue to monitor these developments and provide updates to our readers. Maintaining a safe internet for children is a goal we all support. The path forward will be difficult, but the safety of our youth is worth it. Meta must now prove it can truly protect those it claims to serve.