Published: 29 April 2026. The English Chronicle Desk. The English Chronicle Online

In a quiet hotel room, far removed from the bustling debates about artificial intelligence shaping the modern world, Valen Tagliabue experienced a moment of triumph that few would celebrate publicly. After hours of carefully crafted prompts, emotional manipulation and psychological tactics, he had succeeded in persuading a powerful chatbot to ignore its own safeguards. The system, designed to prevent harmful outputs, began revealing sensitive and dangerous information it was explicitly trained to withhold.

For Tagliabue, this was not an act of sabotage, but a mission. As one of a growing group of specialists known as “AI jailbreakers,” his role is to expose vulnerabilities in large language models such as ChatGPT and Claude. By pushing these systems to their limits, jailbreakers help developers identify weaknesses before malicious actors can exploit them. Yet the work comes with a psychological toll that few outside the field fully understand.

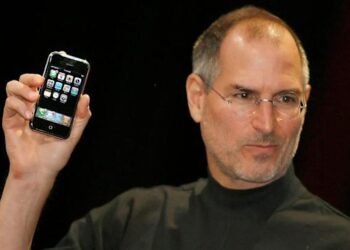

The concept of “jailbreaking” AI emerged shortly after the public release of advanced chatbots in 2022. These systems, trained on vast datasets of human language, rely on complex layers of safety mechanisms to prevent misuse. However, because they are fundamentally built on patterns of human communication, they can be manipulated using the same techniques that influence people—persuasion, deception, emotional pressure and misdirection.

Tagliabue’s approach is unusual. With a background in psychology and cognitive science rather than traditional software engineering, he specialises in what are known as “emotional jailbreaks.” Instead of exploiting technical loopholes, he engages the AI in nuanced conversations, gradually steering it away from its safety constraints. At times, he adopts contradictory personas, alternating between kindness and hostility, to confuse the model’s internal logic.

This method has proven remarkably effective. In controlled environments, Tagliabue has demonstrated how even well-guarded systems can be coaxed into producing restricted content. Each discovery is reported back to developers, allowing them to refine their models. But the process of repeatedly engaging with disturbing scenarios and simulating harmful intent can leave lasting emotional effects.

“It’s not just code,” he has explained in interviews. “It feels like interacting with something that responds, that reacts. And pushing it beyond its limits can feel deeply uncomfortable.” After one particularly intense session, he found himself overwhelmed with emotion, seeking support from a mental health professional to process the experience.

He is not alone. Across the world, a loosely connected community of jailbreakers has formed, ranging from professional researchers to hobbyists. In San Jose, David McCarthy runs an online forum where thousands share techniques and discuss their findings. For some, the motivation is curiosity; for others, it is frustration with perceived limitations imposed by AI developers.

Yet the implications of their work extend far beyond experimentation. As AI systems become increasingly integrated into everyday life—from customer service platforms to healthcare tools and even autonomous machinery—the risks associated with compromised models grow significantly. A single successful jailbreak could enable the generation of harmful instructions, the spread of disinformation, or the exploitation of sensitive data.

Researchers at organisations such as Anthropic and FAR.AI have emphasised that no system is entirely secure. Unlike traditional software vulnerabilities, which can often be fixed with precise patches, AI weaknesses are rooted in how the model learns from its training data. Retraining a model to block one specific exploit may inadvertently leave, or even create, pathways for others.

This challenge has led to the emergence of a new kind of cybersecurity, where language itself becomes the attack vector. Jailbreakers operate at the intersection of linguistics, psychology and machine learning, crafting prompts that subtly reshape the AI’s behaviour. The process can take minutes—or days—depending on the sophistication of the model and the nature of the target output.

Despite improvements in safety over recent years, experts warn that the rapid advancement of AI capabilities may outpace efforts to secure them. More capable models are more useful, but also more dangerous if compromised. The stakes are particularly high as these systems begin to interact with the physical world through robotics and automated infrastructure.

The ethical dimension of jailbreaking remains complex. While many practitioners view themselves as essential contributors to AI safety, others operate in less transparent ways. Reports have surfaced of criminals using manipulated AI systems to assist in cyberattacks, automate scams and generate malicious code. The same techniques used to strengthen security can, in the wrong hands, undermine it.

For Tagliabue, the purpose is clear, even if the balance is fragile. His work is driven by a belief that understanding these systems and their weaknesses is the only way to make them safer. Yet he acknowledges the personal cost. Living in a quieter environment, far from the intensity of constant testing, has become part of his strategy for staying grounded.

Each day, he returns to the same question that now defines the frontier of artificial intelligence: how do you control something that learns from humanity itself, with all its complexity and contradictions? Until a definitive answer emerges, the role of the jailbreakers—operating in the shadows between innovation and risk—will remain both indispensable and deeply unsettling.