Published: March 5, 2026

The English Chronicle Desk

The English Chronicle Online

The UK’s data protection watchdog has formally contacted Meta Platforms after reports emerged that subcontracted workers were able to view highly sensitive and private material recorded by the company’s AI‑powered smart glasses, raising fresh concerns about consumer privacy and data governance in the era of wearable artificial intelligence.

The Information Commissioner’s Office (ICO) said it would write to Meta following an investigation by Swedish newspapers that found workers at a Nairobi‑based outsourcing firm, employed to help train Meta’s AI systems, sometimes reviewed intimate clips captured by users’ Ray‑Ban and Oakley‑branded smart glasses. The footage reportedly showed people in private situations, including using the toilet, undressing and engaging in sexual activity, as well as visible sensitive information such as bank card numbers, according to interviews with the annotators and media reports.

Meta has acknowledged that human review of user‑generated media captured by its smart glasses occurs as part of the data‑labelling process described in its terms of service and privacy policy, which disclose that content shared with Meta AI may be subject to automated and manual inspection. The company said it applies filtering to “protect people’s privacy” and is continually refining its safeguards. But workers and privacy advocates say current measures sometimes fail to sufficiently anonymise imagery or prevent sensitive material from being seen.

The ICO’s letter to Meta emphasised that devices processing personal data should put users in control and be transparent about what data is collected, how it is used and to whom it is disclosed, including when material may be reviewed by humans. The regulatory push reflects broader scrutiny in both the UK and the European Union over how companies comply with high standards of data protection, especially when data is transferred across borders for processing.

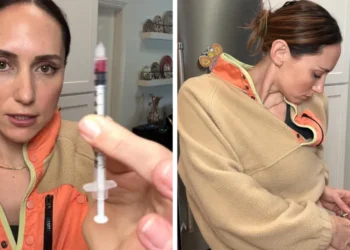

The issues uncovered in the Swedish investigation stem from the way Meta’s smart glasses function: when users activate recording or use AI features that capture audio or video, that media can be processed on Meta’s servers and, in some cases, forwarded to subcontracted data annotators, who label it to teach AI systems how to interpret real‑world content. Some workers reported seeing not only ordinary scenes like living rooms but also footage of intimate moments that the person wearing the glasses may not have realised was being recorded or sent for review.

Privacy experts have noted that clearer consent mechanisms and stricter data minimisation practices are needed when dealing with wearable devices that capture real‑world environments and personal interactions. Without stronger protections, regulators warn that users may unknowingly expose their most private moments — and that companies risk running afoul of data protection laws designed to protect individuals’ rights in an era of pervasive digital surveillance.