Published: 28 September 2025. The English Chronicle Desk

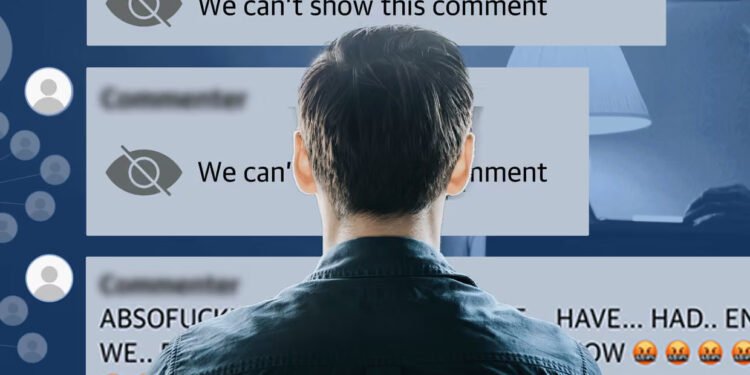

A recent investigation into far-right networks on Facebook has revealed that these online communities are acting as significant drivers of radicalisation in the United Kingdom, exposing hundreds of thousands of users to extremist narratives and racist misinformation. The Guardian-led probe, which analysed months of data, suggests that otherwise ordinary members of the public, many of them older adults and retirees, are running these groups, creating spaces where far-right rhetoric flourishes largely unchecked.

These Facebook communities, often presented as political discussion forums, have become hotbeds of anti-immigration and racially charged content. Experts who reviewed the data warned that such environments can push individuals toward extreme views, increasing the likelihood of actions ranging from online harassment to real-world violence. The findings come in the wake of a massive far-right rally in London, which drew up to 150,000 participants from across the country according to police estimates, alarming politicians with its size and intensity.

The Guardian’s investigative team identified the groups by tracing the online activity of participants involved in riots following the tragic deaths of three girls in Southport last summer. From this data, a broader ecosystem of interconnected far-right communities emerged. Within these groups, mainstream politicians were routinely described with terms such as “treacherous,” “traitors,” and “scum,” while institutions like the police and courts were accused of enacting a “two-tier” system of justice. Even charitable organisations such as the RNLI were disparaged and mocked as a “taxi service,” highlighting the pervasive mistrust and antagonism that characterises these online spaces.

Over 51,000 posts from three of the largest public groups in the network were analysed, revealing hundreds of concerning examples of misinformation, conspiracy theories, racist slurs, and content promoting white nativism. Far-right tropes were commonly recycled across multiple groups, often word-for-word or with minor variations, demonstrating the efficiency with which extremist narratives are spread within the network. This ecosystem of radicalisation is maintained by administrators—predominantly middle-aged and older Facebook users—who manage invitations, moderate discussions, and selectively amplify content, ensuring the messages reach broader audiences across related groups.

The geographic distribution of these administrators spans England and Wales, with concentrations in the south-east and Midlands. Their backgrounds are diverse, encompassing different socio-economic statuses and living situations, from large seaside townhouses on the south coast to modest council homes in urban centres like Birmingham. Despite these differences, the common thread among administrators is their active engagement in curating content that sustains far-right ideology online.

When approached for comment, most administrators declined to speak on the record. One, a resident of a Leicestershire village who moderates six groups with a combined membership approaching 400,000, claimed that far-right users who breached group rules were “deleted and blocked.” However, the investigation found abundant evidence contradicting these claims, including numerous instances of extreme posts containing well-known debunked conspiracy theories and xenophobic content.

Immigrant communities, particularly Muslims, were frequent targets of vitriolic language, ranging from dehumanising labels such as “parasites,” “criminals,” and “primitive,” to broader cultural attacks, accusing them of being “incompatible with the UK way of life.” Some posts employed particularly inflammatory imagery, describing the need for a “humongous nit comb” to remove people deemed undesirable, reflecting the intensity of prejudice being normalised within these online circles. Other messages warned of societal decay, claiming that towns and villages were being “filled” with people bringing crime and “third-world culture,” illustrating the narratives of fear and division propagated within these groups.

Experts emphasised that these online forums are particularly potent because they combine algorithmic amplification with the trust users place in content from peers or community leaders. Dr Julia Ebner, a radicalisation researcher at the Institute for Strategic Dialogue, noted that these spaces act as breeding grounds for extremist ideologies and play a significant role in the radicalisation of individuals. “The online spaces amplify dynamics of hate and conspiracy at unprecedented speed,” she said. “With deepfakes, fabricated videos, and bot automation, people are often more likely to trust content shared by an individual account than official sources, which is inherently dangerous.”

Historically, far-right online movements have found a home on platforms such as 4chan, Parler and Telegram, which tend to cater to younger, more tech-savvy audiences. The emergence of far-right networks on Facebook, however, demonstrates a shift toward older demographics and mainstream social media platforms, broadening the reach and influence of extremist narratives. Many administrators and moderators of these groups are over 60, a cohort that is often more socially integrated and influential in offline communities.

Meta, Facebook's parent company, reviewed the three groups analysed in the Guardian investigation and concluded that, despite the pervasive far-right content, the material did not violate its current hateful conduct policies. This raises broader questions about the effectiveness of content moderation on large social media platforms and highlights the difficulty of policing ideologically driven speech that operates at scale.

The Guardian’s investigation recorded the combined membership of the network at 611,289 as of 29 July 2025, though this figure likely includes double counting, since many users belong to multiple groups. Even allowing for overlap, the scale of these networks underscores the potential for radicalisation to reach significant numbers of people, demonstrating that far-right extremism is not confined to fringe online spaces but has infiltrated mainstream digital platforms where it can reach older, otherwise socially conventional users.

Researchers warn that the combination of algorithmic amplification, peer reinforcement, and repeated exposure to extremist content creates a potent environment for radicalisation. Online hate can shift from rhetoric to real-world action, as evidenced by the riots and protests linked to far-right mobilisation in the UK since the summer of 2024. The persistence of these groups, coupled with limited effective oversight, allows extremist narratives to spread rapidly, reaching audiences who might not otherwise encounter such content.

Ultimately, this investigation highlights the urgent need for a multi-pronged response, including improved content moderation, public awareness campaigns, and research-driven strategies to counteract the appeal of online far-right ideologies. UK law enforcement, policymakers and social media platforms are now under increasing pressure to address the systemic issues that allow radicalisation to proliferate in seemingly ordinary online communities. While Facebook connects people around the world, the rise of far-right networks within it illustrates how social media can also serve as a conduit for misinformation, hate, and extremist mobilisation.

The findings also reinforce the critical role of independent investigative journalism in uncovering these dynamics. By tracing the links between online activity and offline mobilisation, investigations like the Guardian’s illuminate the hidden mechanisms through which extremist networks operate, exposing both the reach of radical narratives and the need for policy responses that address the digital ecosystem’s role in shaping political and social behaviour.

As far-right groups continue to grow and adapt online, the challenge for regulators and society at large will be to balance freedom of speech with the imperative to prevent the radicalisation of vulnerable individuals. This investigation provides a sobering reminder of the scale, sophistication, and societal impact of far-right networks operating under the guise of ordinary social media communities.