Published: 09 January 2026. The English Chronicle Desk. The English Chronicle Online.

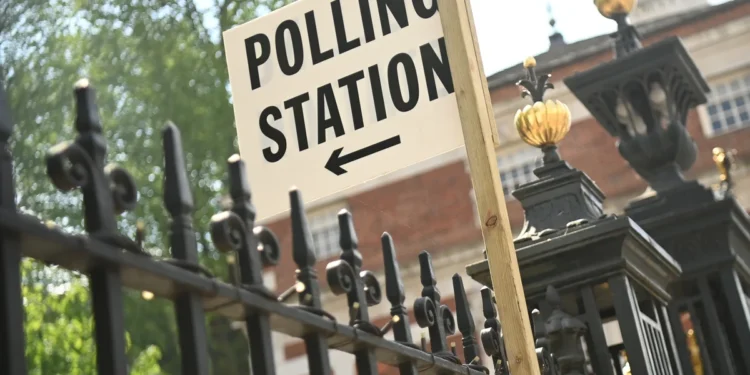

As election campaigns approach in Scotland and Wales, concerns about election deepfakes have moved from theory to urgent preparation. Officials responsible for safeguarding democratic integrity are accelerating work on new technology designed to detect manipulated videos and images before they mislead voters. The initiative reflects growing global anxiety that artificial intelligence could undermine trust during elections, even where no confirmed domestic cases have yet emerged.

The pilot project, being developed jointly by the Electoral Commission and the Home Office, aims to identify election deepfakes targeting candidates in the devolved elections scheduled for later this year. Officials involved say the software should be operational before formal campaigning begins in late March. The intention is to provide early warning, allowing swift action if fabricated content circulates online and threatens to distort public debate.

Sarah Mackie, chief executive of the Electoral Commission in Scotland, has described the work as a rapid response to evolving risks. She has emphasised that while the technology can flag suspicious content, it cannot deliver absolute certainty in every case. When the system identifies a likely deepfake, officials plan to contact police, inform the affected candidate, and alert the public promptly. They will also approach social media companies to request the removal of the material, although current arrangements rely largely on voluntary cooperation.

The limitations of voluntary takedowns have become a central concern. Mackie has argued that election administrators need legally enforceable powers compelling platforms to remove proven hoax material. Without such authority, responses may be delayed or inconsistent, allowing election deepfakes to gain traction before corrective action occurs. The commission has urged the UK government to consider introducing formal takedown powers to close what it sees as a regulatory gap.

Although Britain has not yet experienced a confirmed case of deepfake material influencing an election campaign, international evidence points to a sharp rise in such material elsewhere. In recent contests abroad, manipulated videos have portrayed candidates making statements they never uttered, sometimes spreading faster than official corrections. The spread of free and accessible AI image and video tools has lowered technical barriers, increasing the likelihood that similar tactics could appear in UK elections.

British democratic processes have already faced persistent interference through coordinated online activity. State-linked networks associated with countries including Russia, Iran, and North Korea have previously targeted UK elections and referendums. These campaigns typically rely on fake accounts designed to amplify division or undermine confidence in institutions. The emergence of election deepfakes adds a new and potentially more persuasive layer to those established tactics.

At a pre-election briefing in Edinburgh, Mackie also highlighted broader work focused on candidate safety and confidence. The commission is collaborating with the Scottish Parliament and Police Scotland on measures to support women and candidates from black, Asian, and minority ethnic backgrounds. Research conducted in 2022 revealed that around half of female candidates experienced abuse during campaigns, with many reconsidering future participation as a result. Minority ethnic candidates reported similar fears, raising concerns about long-term impacts on political diversity.

The growing sophistication of AI-driven abuse compounds these challenges. Mackie warned that pornographic manipulation tools, sometimes described as digital “undressing” technologies, could be used maliciously during campaigns. If such content appeared in an electoral context, it would be treated as a serious offence and referred to police. Platforms linked to these tools, including Elon Musk’s X and its Grok AI service, have faced criticism for responding slowly to harmful content.

Senior politicians at Westminster have called for stronger regulatory action, arguing that existing safeguards have not kept pace with technological change. Ofcom, the UK communications regulator, has also been urged to take a more assertive stance. However, Mackie acknowledged that the Electoral Commission does not currently have a clearly defined legal role in regulating election deepfakes directly. The pilot project is therefore intended as a learning exercise, testing practical responses within existing powers.

If successful, the initiative could be expanded to cover all future UK elections. Officials hope the data gathered will inform policymakers, regulators, and technology companies about effective interventions. Mackie has described the approach as stepping into an unregulated space, recognising that while campaigning rules exist around the edges, the centre remains exposed to emerging digital threats.

The Home Office has framed the project as part of a broader commitment to election security. A spokesperson pointed to the Online Safety Act, which requires platforms to remove unlawful content and limit the spread of material causing psychological or physical harm. Protecting elections from sophisticated manipulation, the department says, is essential to maintaining public trust, and it argues that the pilot will strengthen the UK’s ability to detect and counter election deepfakes before damage occurs.

Public confidence remains the central concern. Democratic systems rely on shared acceptance of reality, particularly during elections. Even a small number of convincing forgeries can seed doubt, forcing candidates and officials into reactive positions. By the time corrections are issued, narratives may already be entrenched. This asymmetry explains why early detection is seen as vital, even if the technology is imperfect.

The Scottish and Welsh elections will therefore serve as a testing ground. They offer a contained setting in which to assess how detection software performs under real campaign conditions. Officials will monitor accuracy rates, response times, and cooperation from social media companies. They will also evaluate how the public reacts when informed that suspicious content has been identified as likely fabricated.

Ultimately, the debate over election deepfakes extends beyond technical solutions. It raises questions about responsibility, regulation, and resilience. While software can flag anomalies, broader media literacy and transparent communication remain essential. Voters must understand that manipulated content exists and that institutions are actively working to counter it.

As campaigning begins later this spring, the effectiveness of this pilot will be closely watched. Its success or failure may shape future legislation and define how the UK confronts one of the most complex challenges facing modern democracy. In an era where images and videos can no longer be taken at face value, protecting electoral integrity requires both innovation and vigilance, ensuring that trust, once lost, does not become impossible to restore.