Published: 10 March 2026. The English Chronicle Desk. The English Chronicle Online.

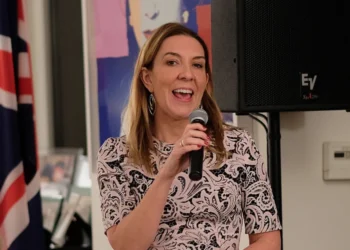

Ministers must urgently respond to the rising threat of deepfakes, technology secretary Liz Kendall said.

Kendall explained that the rapid pace of technological development is now far outstripping the government’s capacity to regulate. She warned that women and girls face particular risks from online abuse and artificially generated content. The technology secretary suggested introducing annual reviews of digital safety laws, similar to the procedure for the finance bill.

Speaking to the Guardian, Kendall outlined her concerns following a roundtable with leading tech companies including Meta, Snapchat, Reddit, Match Group, Google, TikTok, and OnlyFans. She urged these platforms to take stronger action against misogyny and harmful online material, highlighting that previous legislation has been too slow to respond to evolving threats.

“It took eight years for the Online Safety Act to come into effect, and technology has advanced too quickly to match that pace,” Kendall said. She emphasised that relying on infrequent legislation leaves women and children vulnerable to exploitation. “We must explore ways to review regulations much more quickly to keep pace with these developments,” she added.

Kendall recently launched a consultation on banning social media use for under-16s, which is expected to report its findings this summer. She indicated that the government could introduce new laws after the consultation concludes, potentially through secondary legislation, which limits opportunities for MPs to amend proposals.

Campaigners supporting a social media age restriction believe that Keir Starmer is likely to endorse such measures, but have expressed concern that a weak ban may be implemented without meaningful parliamentary input. Kendall acknowledged these worries, noting that while MPs would vote on secondary legislation, amendments may not be permitted, which could reduce the strength of protections for children.

In addition to age restrictions, Kendall has announced plans to bring AI chatbots under the remit of the Online Safety Act. This change would hold companies accountable for content generated by AI tools, extending liability beyond material posted by human users. The move follows controversy surrounding Elon Musk’s platform X, whose Grok chatbot allowed users to produce sexualised images of real people before UK authorities intervened.

Kendall described the government’s response to the Grok incident as firm and principled. “The public rightly expects government action to keep children safe and prevent women from being exploited online,” she said. “Grok began spreading harmful images, and we acted decisively to uphold the law and our values. The platform ultimately complied after pressure from the government.”

She added that the incident demonstrates the government’s determination to confront harmful content aggressively. “I hope our actions regarding Grok show the unwavering commitment of the prime minister and myself to protect women and girls online,” she said. Kendall stressed that technological threats require both rapid legislative action and cooperation from industry leaders to be effective.

The upcoming parliamentary debate on children’s online safety is expected to scrutinise whether social media access should be limited for under-16s. Members of the Commons science, innovation, and technology committee will hear testimony from Australia’s eSafety commissioner, health campaigners, and parent groups, with a focus on practical solutions to prevent online harm. The debate will likely influence the shape of future legislation, particularly regarding age restrictions and platform accountability for AI-generated content.

Kendall highlighted that technology regulation cannot be treated as static legislation updated once every few years. She pointed to the pace of AI development, deepfake creation, and social media evolution as evidence that the government must adopt a more agile approach. Annual reviews, she suggested, could help regulators respond promptly to new risks and protect vulnerable populations more effectively.

The technology secretary also stressed that companies bear a significant responsibility to combat misogyny and harmful content. During the roundtable with major platforms, Kendall urged firms to implement more robust moderation, enforce stricter community guidelines, and invest in AI detection tools. She argued that the private sector has a crucial role in preventing abuse, but government oversight remains essential to ensure compliance.

In her remarks, Kendall described online misogyny and the misuse of deepfakes as a “fast-moving threat” that can inflict serious harm on young women and girls. She warned that without timely intervention, such content could spread rapidly, evade current legal protections, and become increasingly difficult to remove. The proposed measures aim to close gaps in existing law, ensuring that both children and adults receive adequate protection from emerging technological risks.

Campaign groups have welcomed Kendall’s commitment but caution that enforcement and parliamentary scrutiny will be critical. They argue that secondary legislation, while expedient, must be accompanied by rigorous oversight to prevent weak or ineffective protections. The consultation on under-16 social media use represents a key opportunity for public input, but advocates insist that ministers must prioritise children’s safety above industry convenience.

Kendall concluded by emphasising the importance of international cooperation in regulating technology. She noted that online abuse, deepfakes, and AI-generated content often cross national borders, necessitating collaboration between governments, tech companies, and regulatory bodies worldwide. Sharing best practices, pooling resources, and harmonising standards, she argued, would allow harmful content to be mitigated more effectively and reduce the risk of exploitation for vulnerable groups.

Overall, Kendall’s comments signal a shift toward proactive technology regulation, aiming to prevent harm before it escalates. She underscored that protecting women and children online is a moral and legal imperative, and that both the government and industry must act decisively. Her proposals for annual reviews, social media restrictions for minors, and AI accountability represent a comprehensive approach to tackling the deepfake crisis.

As debate continues in Parliament and consultations progress, the government faces mounting pressure to deliver swift, robust measures. Kendall’s focus on technological agility reflects an awareness that digital threats evolve quickly, requiring legislation that is adaptable, enforceable, and responsive to emerging risks. By foregrounding children’s safety, AI regulation, and platform accountability, the government aims to create a safer online environment for all users.

The consultation on under-16s’ social media use, along with measures against AI-generated harmful content, may serve as a template for future digital safety policy. Kendall’s insistence on stronger corporate responsibility and faster legislative action highlights a new era of technology governance, where protecting vulnerable populations is central to policymaking. If adopted effectively, these measures could significantly reduce the prevalence of deepfake misuse and online abuse in the UK.

The government’s approach will be closely watched by campaigners, parents, and tech companies, all of whom have a stake in ensuring meaningful protection. Kendall’s remarks demonstrate an urgent recognition that outdated frameworks cannot keep pace with modern threats, and that proactive, evidence-based regulation is essential. By tackling deepfakes, online misogyny, and AI content responsibly, the UK can establish a model for digital safety internationally.