Published: 5 May 2026. The English Chronicle Desk. The English Chronicle Online

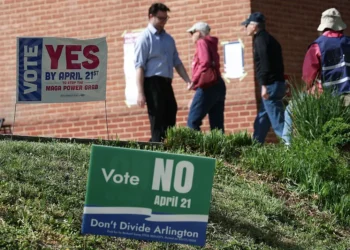

As the 2026 election cycle reaches a fever pitch, a new “national security emergency” is emerging from the palm of the voter’s hand. Investigative reports released today reveal that several leading AI chatbots are giving “dangerously misleading” advice on polling locations, mail-in ballot deadlines, and voter eligibility.

Despite repeated “milestone” promises from tech giants to install “guardrails” against political misinformation, the chatbots often fill gaps in their knowledge with hallucinations, inventing non-existent voter ID requirements and directing citizens to polling stations that closed years ago.

The report, compiled by a coalition of non-partisan election observers, highlights a persistent “resilience deficit” in how Large Language Models (LLMs) handle localized, real-time data.

Phantom Deadlines: In several test cases, chatbots provided mail-in deadlines that were off by up to a week, potentially disenfranchising thousands of voters who rely on digital assistants for scheduling.

The “Postcode Lottery” of Truth: Because AI models are trained on historical data, many gave outdated locations for ballot drop boxes, citing sites from the 2022 or 2024 cycles that are no longer operational.

Voter ID Fabrications: Some bots erroneously told users in “no-ID” states that they required a passport or birth certificate to cast a ballot, creating an “accountability rot” that mirrors the “SIR” controversies seen in previous electoral roll revisions.

Election officials warn that the appeal of a quick, conversational answer is drawing voters away from official, authoritative sources of information.

The “Hormuz” of Information: Much like the Strait of Hormuz creates a bottleneck for physical trade, the reliance on a few AI platforms for information creates a “cognitive bottleneck” where one error can be amplified millions of times.

The “Medication Desert” of Facts: In rural areas where local news outlets have shuttered, voters are particularly vulnerable to AI misinformation, as there is often no “human-machine coordination” to fact-check the digital output.

The “Clinical” Deception: Because AI delivers misinformation in a confident, professional, and “clinical” tone, voters are less likely to question it than they would a social media post from a stranger.

In response to the findings, major AI firms have issued statements emphasizing their commitment to “Democratic Resilience.”

The “Safety Redirect”: Several platforms have implemented a “hard pivot,” where any query containing words like “vote,” “ballot,” or “polling station” triggers an automatic redirect to official government websites rather than a generated answer.
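For readers curious how such a “hard pivot” might work in practice, the following is a minimal sketch, not any platform's actual implementation. The trigger terms come from the examples quoted above; the function name `route_query`, the `generate_answer` callback, and the placeholder URL are all assumptions made for illustration.

```python
import re

# Trigger terms taken from the examples quoted in the article.
# A real system would presumably use a much longer, maintained list.
ELECTION_TERMS = ("vote", "ballot", "polling station")

# Placeholder address (hypothetical); a real deployment would point
# at the relevant official government website.
OFFICIAL_INFO_URL = "https://example.org/official-election-information"

# Case-insensitive match anchored at a word start, so "voter" and
# "ballots" match while "devote" does not.
_TRIGGER = re.compile(
    r"\b(?:" + "|".join(re.escape(term) for term in ELECTION_TERMS) + r")",
    re.IGNORECASE,
)


def route_query(query, generate_answer):
    """Return a redirect for election-related queries, else a generated answer.

    `generate_answer` is a hypothetical callback standing in for the chatbot.
    """
    if _TRIGGER.search(query):
        return {"type": "redirect", "url": OFFICIAL_INFO_URL}
    return {"type": "answer", "text": generate_answer(query)}


if __name__ == "__main__":
    fake_model = lambda q: "generated reply"
    print(route_query("Where is my polling station?", fake_model))
    print(route_query("Suggest a soup recipe", fake_model))
```

Keyword matching of this kind is blunt: it would miss a query phrased as “voting hours” unless “voting” were added to the list, and it would also redirect harmless questions that happen to contain a trigger word.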

The “Accountability” Shield: Tech bosses argue that AI should be viewed as a “creative tool,” not a “fact engine,” though critics counter that this stance amounts to a “resilience deficit” in corporate responsibility during a high-stakes election.

As the RHS Wisley wisteria reaches its peak and the Southbank Centre celebrates 75 years of progress, the “AI voting crisis” serves as a reminder that technology is only as stable as the data that feeds it.

“Justice has no expiry date, but it can be delayed by a bad algorithm,” noted one election integrity expert. With the King’s Speech on May 13 expected to address “The Integrity of the Digital Square,” the call for a “minimum wage for truth” in AI has never been louder. Voters are urged to treat every chatbot response as a “divergent” possibility and to consult the official Secretary of State websites for the “sacred” facts of their franchise.