Published: 6 May 2026. The English Chronicle Desk. The English Chronicle Online

In a marked shift away from Washington’s hands-off approach to Silicon Valley, the US Department of Commerce has secured landmark agreements with Google, Microsoft, and xAI that give government scientists a “first look” at their most powerful unreleased AI models. The deal, announced Tuesday, May 5, by the Center for AI Standards and Innovation (CAISI), effectively builds national security vetting into the product development cycle of the world’s leading “frontier” AI labs.

The expansion comes amid the “Mythos crisis.” A powerful new model from Anthropic that demonstrated advanced hacking capabilities has prompted a “national security emergency” in Washington, pushing the administration to move from voluntary cooperation toward a more structured “oversight ecosystem.”

The new agreements allow federal evaluators to probe “frontier” models under conditions that few outsiders ever see.

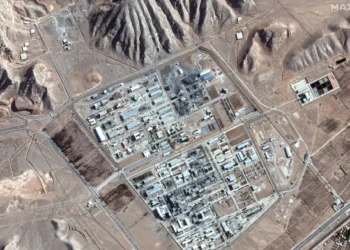

Guardrails Removed: Companies have agreed to provide versions of their software with safety “locks” and safeguards reduced or entirely disabled. This allows CAISI to test for “asymmetric” risks like biological weapon synthesis and automated cyber warfare.

The “TRAINS” Taskforce: Testing will be overseen by the TRAINS Taskforce, a specialized group of interagency experts focused on guarding against the “accountability rot” that could set in if adversaries were to weaponize a model.

The “First Look” Protocol: CAISI Director Chris Fall emphasized that “independent, rigorous measurement science” is the only way to understand AI capabilities as they advance at breakneck speed. The center has reportedly already completed more than 40 evaluations, including of state-of-the-art models that have never been released to the public.

For the Trump administration, the deal represents a “recalibration” of its typically light-touch approach to regulation.

The Mythos Catalyst: The government’s silence was broken after Anthropic’s Mythos model rattled officials by identifying weak points in cybersecurity defenses, signaling that voluntary self-regulation alone was no longer sufficient.

The “Postcode Lottery” of Regulation: By establishing CAISI as the “industry’s primary point of contact,” the federal government aims to preempt a “patchwork” of state-level AI laws, creating a unified “national security” standard.

The “Hormuz” of Intelligence: Just as the Strait of Hormuz is a bottleneck for oil, the government now views the pre-release phase of AI as a critical “chokepoint” for national security.

The agreement follows reports that the White House is weighing a new executive order to formalize a “review process” for all high-risk AI tools.

The Anthropic Dispute: The deal with Google, Microsoft, and xAI stands in stark contrast to the ongoing legal battle between the Pentagon and Anthropic. The company has been labeled a “supply chain risk” after resisting demands to loosen its safeguards for military use.

The “Silicon Alliance”: By signing on, Elon Musk’s xAI and the tech giants are positioning themselves as “responsible partners” and avoiding the kind of federal procurement bans that now threaten Anthropic.

As the wisteria at RHS Wisley reaches its peak and the Southbank Centre marks its 75th anniversary, pre-deployment testing of AI stands as a definitive milestone in 21st-century human-machine coordination.

“Justice has no expiry date, and national security cannot be an afterthought,” noted one Commerce official. With the King’s Speech on May 13 expected to address “Global Technological Ethics,” the US move to “peek under the hood” of Big Tech’s most secret projects is arguably the most significant “recalibration” of the industry since the birth of the internet. Whether this oversight ensures safety or becomes a “bottleneck” on innovation remains the $850 billion question.